My first talk at VS Live Chicago this week (if you’re looking for my IoT talk, please click here) was based on a talk I started doing last year demonstrating fundamental unit testing techniques with NodeJS and Mocha. Since then, the code and the talk have evolved into a real API that is currently in early alpha at Neudesic.

In this session, we started by looking at the problem (and the opportunity) with long, ugly URLs and how most URL minification APIs like bit.ly, tinyurl, etc. solve the problem today.

From there, we looked at why NodeJS is a great choice for building a Web API and proceeded to build the 3 key APIs required to fulfill the most fundamental features you’d expect from a URL shortening API including:

- Shorten

- When I submit a long, ugly URL to the create API, I should get back a neurl.

- Redirect

- When I submit a neurl to the submit API, my request should be automatically redirected.

- Hits

- When I submit a neurl to the hits API, I should get back the number of hits/redirects for that neurl.
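To make the three behaviors concrete, here is a minimal in-memory sketch in Node. It is not the code from the talk: the function names, the base-36 counter used for short codes, and the `Map` standing in for the database are all assumptions made for illustration.

```javascript
// In-memory sketch of the three operations; a real service would persist to a database.
const links = new Map(); // short code -> { url, hits }
let counter = 0;

// Shorten: validate and store the long URL, then return a short code.
function shorten(longUrl) {
  new URL(longUrl); // throws a TypeError if the URL is malformed
  const code = (++counter).toString(36);
  links.set(code, { url: longUrl, hits: 0 });
  return code;
}

// Redirect: look up the code, count the hit, and return the target URL.
// An HTTP handler would respond with a redirect status and a Location header.
function redirect(code) {
  const entry = links.get(code);
  if (!entry) return null;
  entry.hits += 1;
  return entry.url;
}

// Hits: report how many times the code has been redirected.
function hits(code) {
  const entry = links.get(code);
  return entry ? entry.hits : null;
}
```

Keeping the logic in plain functions like these also makes it straightforward to unit test before any HTTP routing is added.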

With the API up and running on my laptop, we proceeded to create an Azure Website and push the Node app from my local Git repository, taking it live. All was not well, unfortunately, as initial testing of the Shorten API returned 500 errors. A quick look at the log dumps using the venerable Kudu console revealed the cause: the environment variable for the MongoDB connection string didn’t exist on the Azure Website deployment. This was quickly remedied by adding the variable to the website from the Azure portal. Yes, this error was fully contrived, but Kudu is so cool.
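One way to make this kind of misconfiguration easier to spot is to check required settings at startup and fail with a descriptive message. The sketch below is an illustration rather than the code from the talk; the helper `requireEnv` and the variable name `MONGODB_URI` are hypothetical.

```javascript
// Read a required setting and fail with a descriptive error if it is absent.
// The second parameter defaults to process.env and exists to make testing easy.
function requireEnv(name, env = process.env) {
  const value = env[name];
  if (value === undefined || value === "") {
    throw new Error(`Missing required environment variable: ${name}`);
  }
  return value;
}

// At startup (commented out so the sketch runs anywhere).
// MONGODB_URI is a hypothetical name, not the one used in the talk:
// const mongoUri = requireEnv("MONGODB_URI");
```

With a check like this, the missing setting shows up as a single clear line in the logs instead of a generic 500 on the first request.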

With the API up and running, we exercised it a bit, verifying that the Redirect and Hits APIs were good to go, and then scaled out the API from one to six instances with just a few clicks.

As the API continues to mature, I’ll update the talk to demonstrate how the level of indirection introduced by virtualizing the actual URL (as with traditional services and APIs) creates many opportunities to interact with the person consuming the API (all via URIs!) as they take the journey that starts with the click and ends at the final destination.

Without further ado, the code and more details on the talk can be found below.

Code: https://github.com/rickggaribay/neurl

Abstract: http://bit.ly/1iEEbNV

Speaking of which, if you haven’t already, why not register for Visual Studio Live Redmond or Washington DC? Early bird discounts are currently available so join me to see where we can take this API from here! http://bit.ly/vslive14

I had the pleasure of presenting at Visual Studio Live! Chicago this week. Here is a recap of my second talk, “From the Internet of Things to Intelligent Systems- A Developer’s Primer” (if you’re looking for a recap of my “Building APIs with NodeJS on Microsoft Azure Websites” talk, you can find it here).

While analysts and industry pundits can’t seem to agree on just how big IoT will be in the next 5 years, the one thing they all agree on is that it will be big. Estimates range from a bearish 50B internet-connected devices by 2020 to a more moderate 75B and a bullish 200B. But the reality is that IoT isn’t something that’s coming. It’s already here and this change is happening faster than anyone could have imagined. Microsoft predicts that by 2017, the entire space will represent over $1.7T in market opportunity spanning from manufacturing and energy to retail, healthcare and transportation.

While it is still very early, it is clear that the monetization opportunities at this level of scale are tremendous. As I discussed in my talk, the real opportunity for organizations across all industries is two-fold. First, the data and analytical insights that the telemetry (voluntary data shared by the devices) provides will change the way companies plan and execute, and the rate at which they adapt and adjust to changing conditions in their physical environments. This brings new meaning to decision support and no industry will be left untouched in this regard. Second, these insights will lead to intelligent systems that are capable of taking action at a distance based either on pre-configured rules that interpret this real-time device telemetry or on other command and control logic that prompts communication with the device.

As a somewhat trivial but useful example, imagine your coffee maker sending you an SMS asking your permission to start a descaling job. Another popular example of a product that’s already had significant commercial success is the Nest thermostat. Using microcontrollers very similar to the ones I demonstrated, these are simple examples that are already possible today.

Beyond the commercial space, another very real example is a project my team led for our client that involved streaming meter and sensor telemetry from a large downtown metroplex enabling real-time, dynamic pricing, up-to-the-minute views into parking availability and significant cost and efficiency savings by adopting a directed enforcement approach to ticketing.

So, IoT is already everywhere and in many cases, as developers we’re already behind. For example, what patterns do you use for managing command and control operations? How do you approach addressability? How do you overcome resource constraints on devices ranging in size from drink coasters to postage stamps? How do you scale to hundreds and thousands of devices that are sharing telemetry data every few seconds? What about security?

While 75 minutes is not a ton of time to tackle all of these questions, I walked the audience through the following four scenarios based on the definition of the Command message pattern in the "Service Assisted Communications" paper that Clemens Vasters (@clemensv) at Microsoft published this February:

1. Default Communication Model with Arduino - demonstrates the default communication model whereby the Arduino provides its own API (via a Web Server adapted by zoomcat). Commands are sent from the command source to the device in a point to point manner.

2. Brokered Device Communication with Netduino Plus 2 - demonstrates an evolution from the point to point default communication model to a brokered approach to issuing device commands using MQTT. This demo uses the excellent M2MQTT library by WEB MVP Paolo Patierno (@ppatierno) as well as the MQTT plug-in for RabbitMQ (both on-premises and hosted RabbitMQ).

3. Service-Assisted Device-Direct Commands over Azure Service Bus - applies the fundamental service assisted communications concepts, evolving the brokered example to leverage Azure Service Bus using the Device Direct pattern (as opposed to Custom Gateway). As with the brokered model, the device communicates with a single endpoint in an outbound manner, but it does not require a dedicated socket connection as MQTT does, which implicitly addresses occasionally disconnected scenarios, message durability, etc.
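The difference between pushing a command straight at a device and leaving it in a durable queue for the device to collect can be sketched in a few lines of Node. This is an in-memory stand-in, not Azure Service Bus or MQTT code; the `CommandBroker` class, the device id and the command names are all hypothetical.

```javascript
// In-memory stand-in for a broker with one durable command queue per device.
class CommandBroker {
  constructor() {
    this.queues = new Map(); // deviceId -> pending commands, oldest first
  }

  // Called by the command source; the device does not need to be online.
  send(deviceId, command) {
    if (!this.queues.has(deviceId)) this.queues.set(deviceId, []);
    this.queues.get(deviceId).push(command);
  }

  // Called by the device over its own outbound connection.
  // Returns the oldest pending command, or null when nothing is waiting.
  receive(deviceId) {
    const queue = this.queues.get(deviceId);
    return queue && queue.length > 0 ? queue.shift() : null;
  }
}

const broker = new CommandBroker();
broker.send("heater-1", { name: "setFanSpeed", value: 2 }); // device is offline
broker.send("heater-1", { name: "heaterOn", value: true });

// Later, the device reconnects and drains its queue in order.
let command;
while ((command = broker.receive("heater-1")) !== null) {
  console.log("executing", command.name);
}
```

Because the device always initiates the connection, it never has to expose an inbound endpoint, and commands sent while it is disconnected simply wait in the queue.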

In the final, capstone demo, “Service-Assisted Device-Direct Commands on the Azure Device Gateway”, I demonstrated the culmination of work dating back to June 2012 (in which Vasters first shared the concept of Service-Assisted Communications) which is now available as a reference architecture and fully functional code base for customers ready to adopt an IoT strategy today:

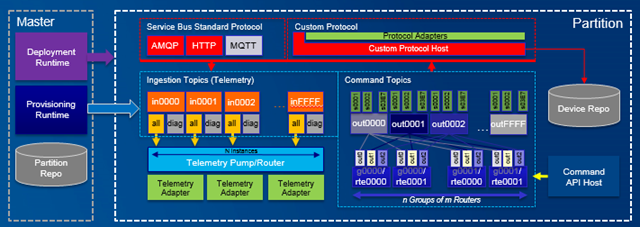

As a set up for the demo, I discussed the Master and Partition roles. The Master role manages the deployment of partitions and the provisioning of devices into partitions using the command line tools that ship with the code base.

In the demo, I provided a look at the instance of Reykjavik deployed on our Neudesic Azure account including the Master and Partition roles. I showed the Azure Service Bus entities for managing the ingress and egress of device messaging for command, notification, telemetry and inquiry traffic (the Device Gateway currently supports 1024 partitions, with each partition supporting 20K devices) as well as the storage accounts responsible for device registration and storing partition configuration settings.

I also discussed the protocols for connecting the device to the gateway (AMQP and HTTP are in the box and an MQTT adapter is coming very soon) and walked through the Telemetry Pump, which dispatches telemetry messages to the registered telemetry adapter (Table Storage, HDInsight adapters, etc.).
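The pump-and-adapter idea can be illustrated with a small Node sketch. This is not the Reykjavik implementation: the `TelemetryPump` class, the adapter shape (`name` plus `write`), and the choice to fan out to several adapters and collect failures are assumptions made for the example.

```javascript
// Sketch of a pump that hands each telemetry message to every registered adapter.
class TelemetryPump {
  constructor() {
    this.adapters = [];
  }

  // An adapter is any object with a name and a write(message) method.
  register(adapter) {
    this.adapters.push(adapter);
  }

  // Dispatch one message; returns the names of adapters that threw,
  // so one failing store does not block the others.
  dispatch(message) {
    const failed = [];
    for (const adapter of this.adapters) {
      try {
        adapter.write(message);
      } catch (err) {
        failed.push(adapter.name);
      }
    }
    return failed;
  }
}

// A trivial adapter that keeps rows in memory, standing in for a real store.
const rows = [];
const memoryAdapter = { name: "memory", write: (message) => rows.push(message) };

const pump = new TelemetryPump();
pump.register(memoryAdapter);
pump.dispatch({ from: "heater-1", otm: 21.5 });
```

Swapping in a different store then means writing one more object with a `write` method and registering it.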

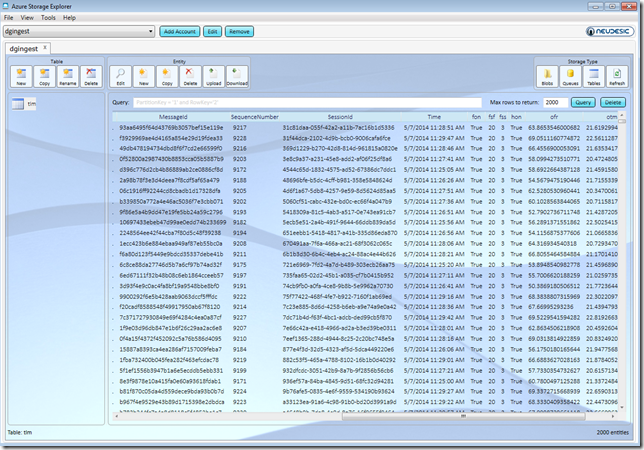

The demo wrapped up with a Reykjavik device sample consisting of a Space Heater emulator that I registered on the Neudesic instance of the Device Gateway to acquire its ingress and egress endpoints. The emulator initializes its fan speed and rpm and begins sending telemetry messages to its outbox every 30 seconds (fully configurable).

The beauty of the demo is in its simplicity. Commands are received via the device’s inbox and telemetry is shared via its outbox. The code is simple C# with no heavy frameworks, which is really key to running on devices with highly constrained resources:

```csharp
void SendTelemetry()
{
    this.lastTelemetrySent = DateTime.UtcNow;

    // The readings travel as properties on a single Service Bus message.
    var tlm = new BrokeredMessage
    {
        Label = "tlm",
        Properties =
        {
            {"From", gatewayId},
            {"Time", DateTime.UtcNow},
            {"tiv", (int) this.telemetryInterval.TotalSeconds}, // telemetry interval in seconds
            {"fsf", this.fanspeedSettingRpmFactor},
            {"fss", this.fanSpeedSetting},
            {"fon", this.fanOn},
            {"tsc", this.temperatureSettingC},
            {"hon", this.heaterOn},
            {"ofr", this.lastObservedFanRpm},
            {"otm", this.lastObservedTemperature}
        }
    };

    // Each telemetry message gets a fresh session id.
    tlm.SessionId = Guid.NewGuid().ToString();

    // The send is not awaited here.
    this.sender.SendWithRetryAsync(tlm);
}
```

A screenshot from the telemetry table populated by the Reykjavik Table Storage adapter is shown in the Neudesic Azure Storage Explorer below:

As I discussed, this is an early point in a journey that will continue to evolve over time, but the great thing about this model is that everything I showed is built on Microsoft Azure, so there’s nothing to stop you as a developer from building your own Custom Protocol Adapter. This is really the key to the thinking and philosophy around Device Gateway.

It is still very early in this wave and every organization is going to have different devices, protocols and requirements. So while you’ll see investments in the most common protocols (AMQP, MQTT, and CoAP), the goal is to make this super pluggable and fully embrace custom protocol gateways that just plug in.

As with the Protocol Adapters, there’s nothing to stop you from building your own Telemetry Adapter or using Azure Service Bus or BizTalk Services to move data on-premises, etc.

Still with me? Great. The links to my demo samples and more details on the talk are available here:

Abstract: http://bit.ly/vsl-iot

Demo Samples: https://github.com/rickggaribay/IoT

Oh, and if you missed the Chicago show, don’t worry! I’ll be repeating this talk in Redmond and Washington DC, so be sure to register now for early bird discounts: http://bit.ly/vslive14